NVIDIA Shifts Its Attention to Inference at GTC Following DeepSeek

NVIDIA’s Response to AI Competition

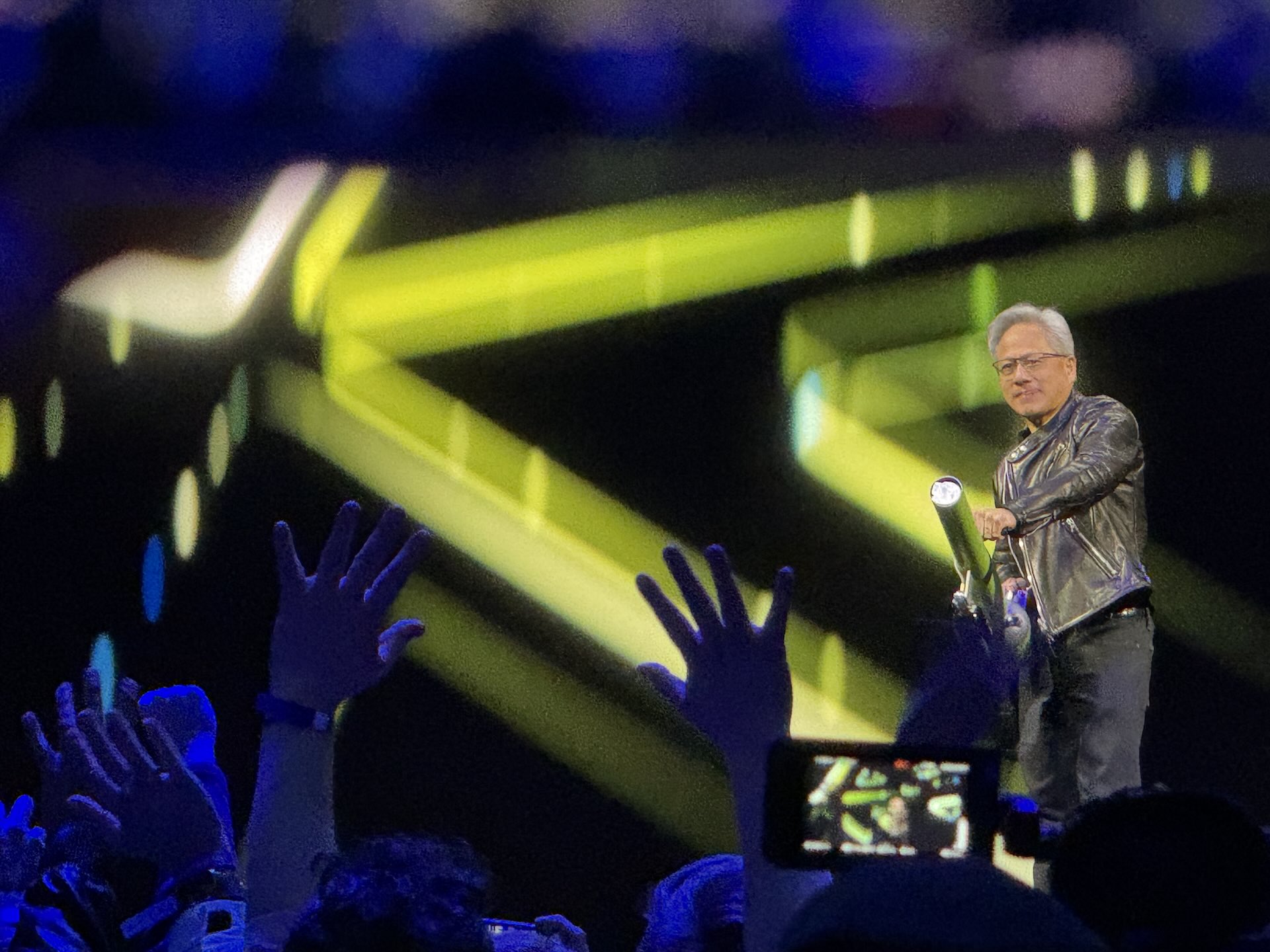

Earlier this year, DeepSeek shook up the AI landscape by releasing a highly competitive reasoning model that it said cost far less to train than rival models. The release sent NVIDIA’s stock sharply lower and raised questions about the sustainability of large-scale AI hardware investments. At NVIDIA’s annual GPU Technology Conference (GTC), CEO Jensen Huang addressed these concerns by introducing an array of new hardware and software products, arguing that future applications will rely heavily on AI.

Growth in AI Computing Demand

NVIDIA expects demand for AI computing to rise dramatically, estimating a tenfold growth compared to previous projections. To illustrate the increasing need for computational resources, Huang compared Meta’s Llama model with DeepSeek’s R1 reasoning model: in his example, R1 produced 20 times more tokens and used 150 times more computing power. "Inference at scale is extreme computing," Huang explained, underscoring the trade-off between latency and computing cost in AI applications.
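The arithmetic behind that comparison can be sketched in a few lines of Python. Only the two ratios (20 times the tokens, 150 times the compute) come from the keynote comparison; the 400-token baseline answer and the 50 tokens-per-second decode rate are hypothetical values chosen purely for illustration, and the function names are not from any NVIDIA tool.

```python
# Ratios quoted in the keynote comparison (reasoning model vs. traditional model).
TOKEN_RATIO = 20      # reasoning model generated about 20x more tokens
COMPUTE_RATIO = 150   # and used about 150x more compute overall


def compute_per_token_ratio(compute_ratio: float, token_ratio: float) -> float:
    """Implied increase in compute spent per generated token."""
    return compute_ratio / token_ratio


def response_time_s(tokens: int, tokens_per_second: float) -> float:
    """Time to generate a full answer at a fixed per-user decode rate."""
    return tokens / tokens_per_second


# Hypothetical workload, assumed for illustration only.
BASELINE_TOKENS = 400        # length of the traditional model's answer
TOKENS_PER_SECOND = 50.0     # per-user generation speed

per_token = compute_per_token_ratio(COMPUTE_RATIO, TOKEN_RATIO)
baseline_time = response_time_s(BASELINE_TOKENS, TOKENS_PER_SECOND)
reasoning_time = response_time_s(BASELINE_TOKENS * TOKEN_RATIO, TOKENS_PER_SECOND)

print(f"compute per token: {per_token}x")           # 7.5x
print(f"baseline answer:   {baseline_time} s")      # 8.0 s
print(f"reasoning answer:  {reasoning_time} s")     # 160.0 s
```

Under these assumptions, the reasoning model spends about 7.5 times more compute on each token, and the same per-user speed stretches an 8-second answer to 160 seconds. Keeping the wait tolerable therefore means generating tokens much faster for each user, which is where the latency-versus-cost tension comes from.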

A Shift in Software Development

Huang noted a significant shift in software development: away from hand-coded software running on general-purpose computers and toward machine learning software running on accelerators and GPUs. He suggested that businesses would soon need to invest capital in building "AI factories" that operate alongside their existing physical operations. In this new landscape, software will not just be retrieved and executed; it will generate tokens autonomously.

New Hardware for Developers

For developers who want to run AI workloads locally, NVIDIA introduced two systems: the DGX Spark and the DGX Station. The DGX Spark is a compact desktop unit, resembling a Mac Studio, that can work alongside an existing laptop or desktop. The DGX Station is a more powerful workstation designed for data scientists, delivering up to 500 teraFLOPS of computational power.

Advancements in Accelerators

To speed up inference and reduce data center costs, NVIDIA announced a range of new accelerators, including the Blackwell Ultra family and the upcoming Vera Rubin and Feynman chip generations. These products promise substantial improvements in computing performance and memory bandwidth over their predecessors. Huang jokingly called himself NVIDIA’s “chief destroyer of revenue,” suggesting that customers should buy the latest chips rather than older models.

Introduction of NVIDIA Dynamo

As part of its push towards improved AI performance, NVIDIA unveiled a new open-source project called Dynamo. This inference software aims to accelerate and scale reasoning models effectively within enterprise data centers. Huang emphasized that different industries are continually evolving their AI training methods, making models more sophisticated. Dynamo is designed to help companies serve these models at scale, which can lead to significant efficiencies and cost savings.

Launching Custom Reasoning Models

In line with its focus on inference, NVIDIA also announced its own family of reasoning models, named Llama Nemotron. According to NVIDIA, these models are optimized for faster inference and offer up to a 20% improvement in accuracy over the Llama models they are built on.

Overall Reception at GTC

The response to this year’s GTC keynote appeared somewhat less enthusiastic than in previous years. Analysts noted that fewer groundbreaking announcements were made, and while the technical innovations were impressive, they lacked the visionary appeal seen in past events. The overall mood suggested a reaction to growing competition rather than a plan for future breakthroughs.

By addressing the evolving landscape of AI technology and pushing forward with innovative solutions, NVIDIA aims to maintain its leadership position in the industry during this transformative period.