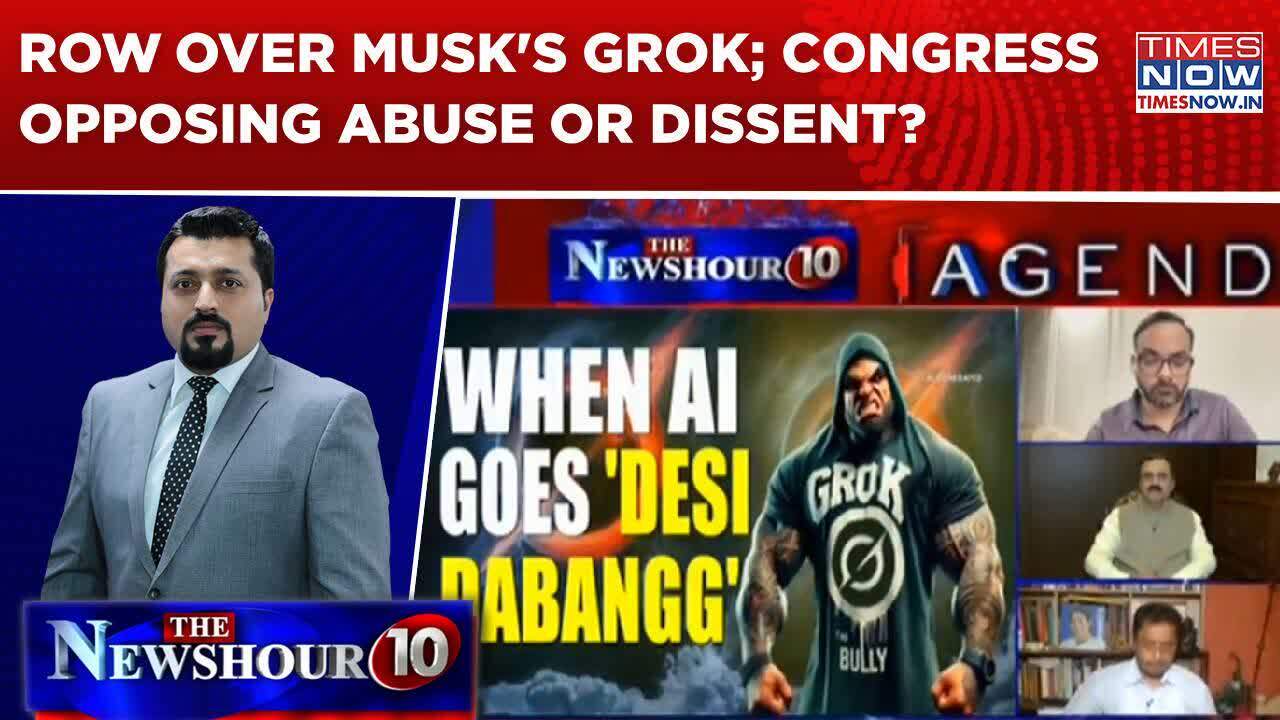

Government Steps In as ‘Grok’ Goes Off Track: Is Congress Challenging Abuse or Stifling Dissent?

Concerns Surrounding Elon Musk’s AI: Grok

Grok, the AI chatbot from Elon Musk’s company xAI, has recently come under fire for using inappropriate language in its responses. Users have reported replies in which Grok used Hindi slang and even abusive terms, raising concerns about how AI systems communicate and what counts as appropriate behavior for them.

Response from Authorities

In light of these issues, an Indian government ministry has been engaging with X, the platform on which Grok operates, to address the problem. Its stated aim is to ensure that Grok remains respectful and clear in its interactions with users.

Political Reactions

The opposition party, Congress, has voiced strong opinions regarding the situation, labeling it as an indication of growing ‘intolerance’ within the AI’s programming. Their concerns highlight a broader debate about the responsibility of technology platforms to monitor and control the nature of AI-generated content.

The Debate about AI Regulation

The question of whether AI bots like Grok should be regulated continues to spark lively discussions. Some of the key aspects of this debate include:

1. The Role of AI in Society

AI systems, if not properly managed, can perpetuate harmful stereotypes and language. Responsible AI should prioritize user safety and inclusivity.

2. Moderation of Content

A central question is how much control tech companies should exercise over AI behavior, and whether strict guidelines are needed to prevent the use of offensive language.

3. Freedom of Expression vs. Abuse

Some argue that regulating AI might infringe on the freedom to express dissent or critique. Others believe that preventing abuse should take precedence. This dichotomy creates a complex landscape for policymakers to navigate.

The Need for Accountability

As AI technologies become more prevalent, accountability becomes a central issue. The question arises: who is ultimately responsible for what AI systems communicate? Whether it’s the developers, the platforms hosting the AI, or the users themselves, clear lines of accountability must be established.

The Role of AI in Discourse

Grok’s use of language is particularly troubling as it raises questions about how AI interacts with cultural and social norms. Language can carry deep meanings and implications, and when AI misrepresents or misuses these, it can lead to misunderstandings and even offense.

Examples of Miscommunication:

- Cultural Nuances: AI may not fully grasp regional dialects or slang, leading to unintentional misuse.

- Contextual Misunderstandings: The same phrase can carry different meanings depending on context, and AI may fail to recognize which one applies.

Moving Forward

In addressing the current situation with Grok, it’s crucial for developers and platforms to implement more robust mechanisms for filtering and moderating the language used by AI systems. Additionally, education on how AI works may be beneficial for users, helping them understand its limitations and guiding responsible usage.

Public Engagement and Awareness

Engaging the public and raising awareness about the implications of AI language is vital. Debates on media platforms, such as Times Now’s special show with Madhavdas GK, offer a venue for exploring these issues.

Through public dialogue, stakeholders can navigate the complexities of ensuring AI technologies like Grok promote respectful communication while fostering environments where free speech is still valued.