New AI Models from Google DeepMind Enable Robots to Carry Out Physical Tasks Without Prior Training

Google DeepMind Introduces Advanced AI Models for Robotics

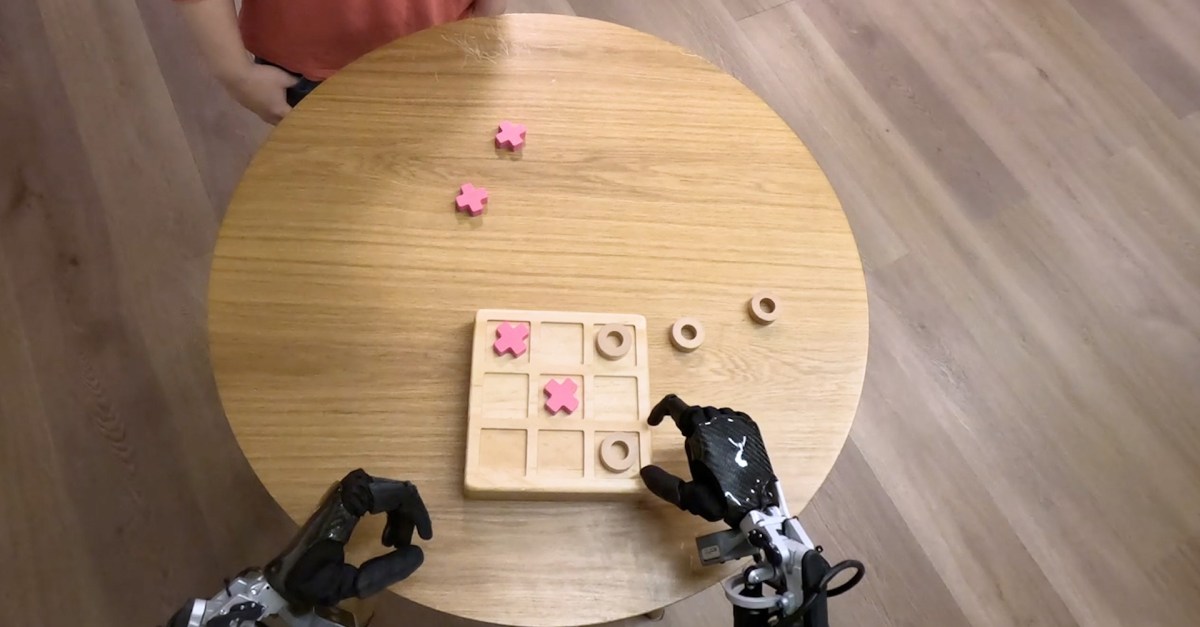

Google DeepMind has unveiled two new AI models, Gemini Robotics and Gemini Robotics-ER, aimed at helping robots handle a wider range of real-world tasks. Both are intended to expand what robots can accomplish in physical environments.

What is Gemini Robotics?

Gemini Robotics is a vision-language-action model that focuses on understanding and interacting with new situations, even those it has not specifically been trained to handle. This groundbreaking model is based on Gemini 2.0, the latest iteration of Google’s flagship AI technology. According to Carolina Parada, Senior Director and Head of Robotics at Google DeepMind, Gemini Robotics enhances the existing multimodal understanding of the Gemini model by incorporating physical actions as a new dimension of interaction.

Key Advancements of Gemini Robotics

Google DeepMind emphasizes three main aspects of Gemini Robotics that are vital for developing efficient robots:

- Generality: The ability to apply learned knowledge to new scenarios.

- Interactivity: Improved methods for robots to engage with both humans and their surroundings.

- Dexterity: Enhanced skills in completing precise physical activities, which can include tasks like folding paper or opening a bottle.

Parada notes that the new model improves performance across all three dimensions, marking a significant leap in robotics. This integrated approach promotes the creation of robots that are not only more capable but also more adaptable to changes in their environments.

Introduction of Gemini Robotics-ER

Alongside Gemini Robotics, Google DeepMind has also announced Gemini Robotics-ER, where "ER" stands for embodied reasoning. This model is described as an advanced vision-language model that can interpret the complexities of our dynamic world.

A practical example provided by Parada illustrates this capability. Imagine packing a lunchbox: a robot utilizing Gemini Robotics-ER would need to assess the table’s layout, identify items, know how to open the lunchbox, and correctly place the items inside. This level of reasoning enhances the robot’s ability to perform everyday tasks effectively.

This model is designed specifically for roboticists, who can connect it to their existing low-level controllers, the systems responsible for managing a robot’s movements. The integration is intended to let those controllers draw on the reasoning capabilities of Gemini Robotics-ER.
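The split described above, with a reasoning model deciding what to do and a low-level controller deciding how to move, can be sketched roughly as follows. This is an illustrative sketch only, not Google DeepMind's API. Every name in it (`Step`, `LowLevelController`, `plan_lunchbox_packing`, `LoggingController`, `run`) is hypothetical, and the "planner" is a hard-coded stand-in for what a model such as Gemini Robotics-ER would infer from camera input.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Step:
    """One high-level instruction, e.g. Step('place', 'sandwich')."""
    action: str
    target: str


class LowLevelController(Protocol):
    """Hypothetical interface for an existing controller that manages movement."""

    def execute(self, step: Step) -> bool: ...


def plan_lunchbox_packing(visible_items: list[str]) -> list[Step]:
    """Hard-coded stand-in for the reasoning model (an assumption, not a real API).

    Mirrors the lunchbox example: open the lunchbox, then place each
    identified item inside it.
    """
    steps = [Step("open", "lunchbox")]
    steps += [Step("place", item) for item in visible_items if item != "lunchbox"]
    return steps


class LoggingController:
    """Toy controller that records steps instead of driving hardware."""

    def __init__(self) -> None:
        self.log: list[str] = []

    def execute(self, step: Step) -> bool:
        self.log.append(f"{step.action} {step.target}")
        return True


def run(steps: list[Step], controller: LowLevelController) -> bool:
    """Hand each step to the controller, stopping at the first failure."""
    return all(controller.execute(step) for step in steps)


controller = LoggingController()
succeeded = run(plan_lunchbox_packing(["lunchbox", "sandwich", "apple"]), controller)
```

Keeping the planner and the controller behind a narrow interface means a roboticist could, in principle, swap in a different reasoning model without rewriting the motion code.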

Emphasis on Safety in Robotics

Vikas Sindhwani, a researcher at Google DeepMind, highlighted the importance of safety in the new models. The company is developing a layered approach in which Gemini Robotics-ER models are trained to assess whether a potential action is safe to perform in a given situation. New benchmarks and frameworks will also be released to support ongoing safety research across the AI sector. Last year, Google DeepMind introduced its "Robot Constitution," a set of guidelines inspired by Isaac Asimov’s Three Laws of Robotics.

Collaborations and Future Prospects

Google DeepMind is collaborating with Apptronik to create the next generation of humanoid robots. Moreover, selected companies are getting early access to the Gemini Robotics-ER model. These trusted testers include notable names in the robotics field such as Agile Robots, Agility Robotics, Boston Dynamics, and Enchanted Tools.

Parada states, "We’re focused on building the intelligence that can understand and interact with the physical world." This dedication hints at a future where robotics can seamlessly integrate into our daily lives, providing valuable assistance in various applications.

Google DeepMind’s efforts signal a significant step forward in robotic technology, making way for smarter, safer, and more interactive robots that can enhance productivity and functionality across multiple sectors.