Attention Studio Ghibli Fans: Understand the Potential Risks of Sharing Your Private and Family Photos with AI

In today’s digital landscape, the use of AI-generated images and videos is becoming increasingly common. As tools like ChatGPT and Grok allow users to create hyper-realistic or stylized digital art, they also raise important questions about the safety and privacy of using AI for personal and family-related imagery. It’s crucial to consider several factors before fully embracing this technology.

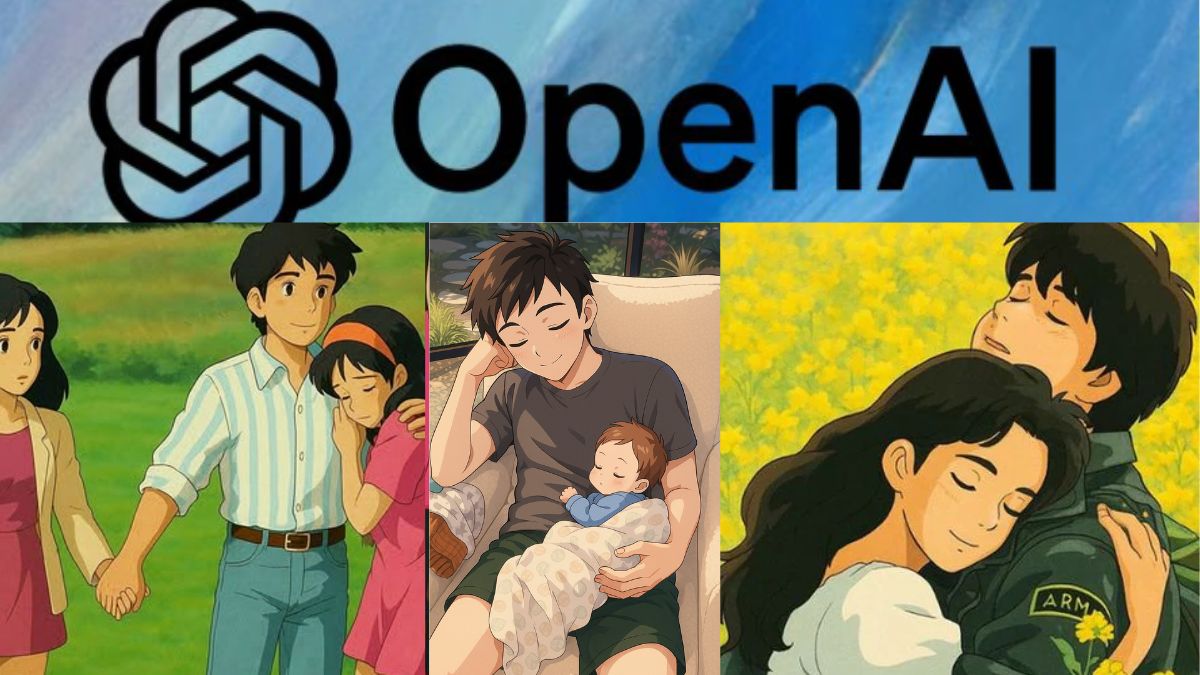

AI-Generated Family Images

Creating AI-generated family portraits, such as those in the style of Studio Ghibli, may seem enjoyable and harmless. However, the process carries privacy risks. Uploading personal photos or descriptions to AI platforms can expose sensitive data. Many users may not be aware that these platforms might store, analyze, or misuse their information, particularly if robust data protection policies are absent. Experts caution that AI systems could retain personal images to train future models or share them with third parties, leading to unintended consequences.

The Risks of Deepfake Technology

Advanced AI-generated imagery carries further risks, particularly deepfake technology, which alters faces and expressions to create highly realistic but entirely fake media. If AI-generated family images are manipulated this way, they could be used to commit fraud, spread misinformation, or appear in advertising without consent. In some cases, such misuse could even help someone gain unauthorized access to personal financial accounts, creating severe risks for users.

Users need to balance creativity and security when employing AI tools like ChatGPT and Grok. While these tools can foster imaginative digital content, they also pose threats to personal data privacy, ownership, and overall security. Preventing misuse means taking protective measures before uploading anything. As AI technology continues to evolve, users should be careful about how they create, store, and share their personal images.

Lack of Transparency in Data Handling

Another pressing concern is the lack of transparency in how data is handled when using AI tools. Many platforms do not clearly disclose their practices regarding the storage and usage of uploaded images. In these cases, personal images could be stored indefinitely or repurposed without the user’s consent. Additionally, AI-generated images are susceptible to manipulation, increasing the risks of deepfakes, identity theft, and other misleading uses. Free or less reputable AI platforms may also present heightened risks of data breaches, which could expose private images and lead to significant privacy violations.

How to Stay Safe While Using AI Tools

Here are some practical tips to help ensure your safety when using AI-generated imagery:

- Consider using AI models that run locally on your own device rather than cloud-based ones, so your photos do not have to be uploaded to a third party.

- Review the privacy policy of any platform before uploading personal images.

- Avoid sharing sensitive or private photos with AI tools that do not guarantee data protection.

- Use reputable AI platforms that have clear data security measures in place.

- If possible, delete any images you upload to these platforms once you’re done with the service.