Clearview AI’s Covert $750,000 Effort to Acquire Your Mugshot

Clearview AI and Its Controversial Surveillance Expansion

Clearview AI, a facial recognition company, recently attempted to significantly broaden its surveillance capabilities. According to documents reviewed by 404 Media, the firm sought to acquire hundreds of millions of arrest records that contained sensitive personal information, including social security numbers and mugshots.

Attempts to Acquire Sensitive Data

Clearview is already notorious for scraping more than 50 billion facial images from social media platforms. In mid-2019, the company signed a contract with Investigative Consultant, Inc. to obtain about 690 million arrest records and 390 million arrest photos from all 50 U.S. states. Jeramie Scott, Senior Counsel at the Electronic Privacy Information Center (EPIC), said the contract showed Clearview’s intent to acquire not just mugshots but also social security numbers, email addresses, and home addresses.

However, the effort failed and led to legal disputes between Clearview and Investigative Consultant. Clearview paid $750,000 for the initial data delivery but claimed the data was “unusable,” and each side accused the other of breach of contract. An arbitrator ruled in Clearview’s favor in December 2023, but the company has yet to recover its initial investment and is now seeking a court order to enforce the arbitration award.

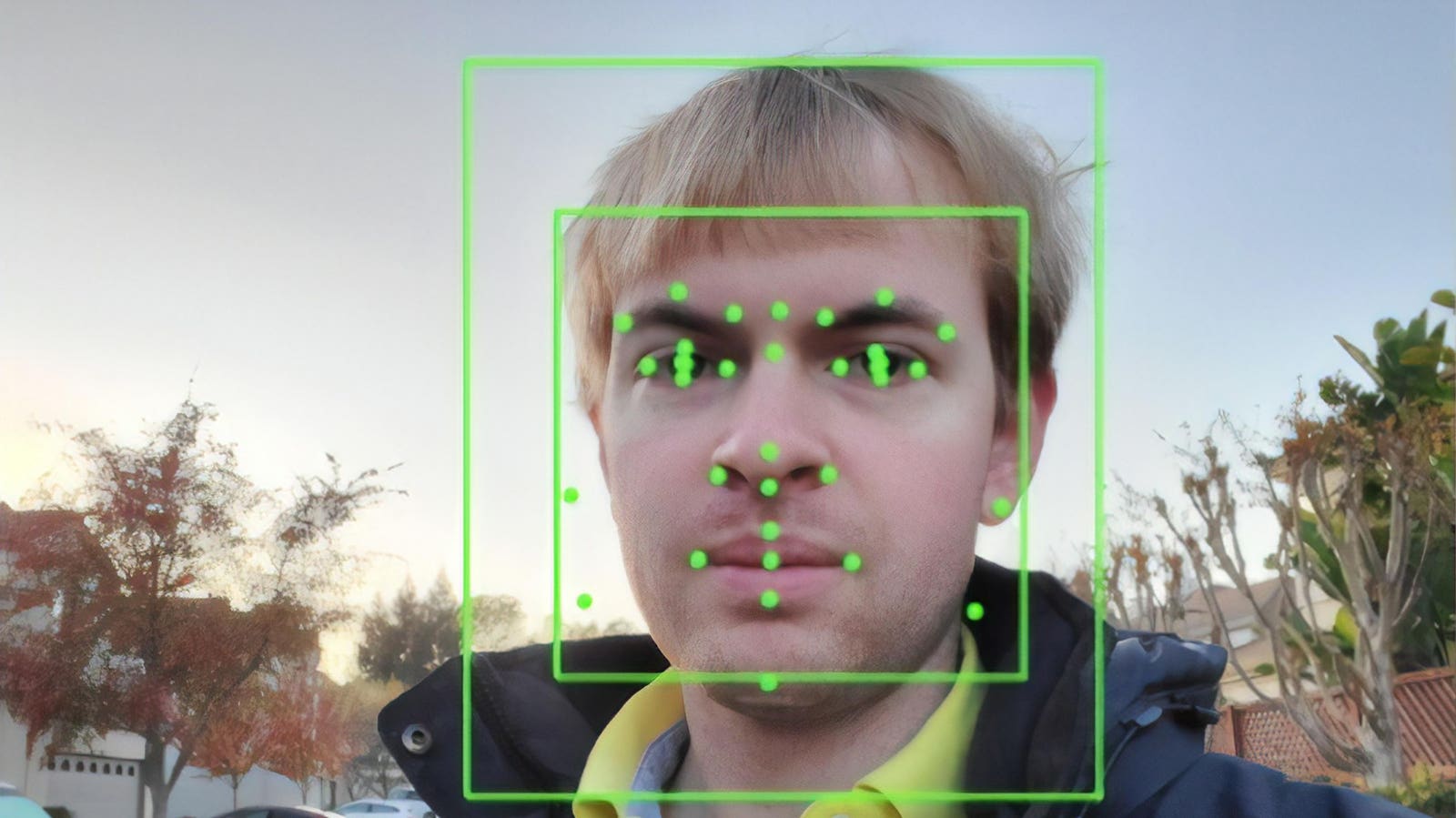

Impact of Facial Recognition Technology

Concerns are mounting over the implications of combining facial recognition technology with criminal justice data. Scott emphasized that linking individuals to mugshots can bias the people reviewing the data, noting that marginalized communities, particularly Black and brown individuals, are disproportionately affected.

Challenges with Accuracy

Facial recognition systems have faced scrutiny over documented failures in identifying people with darker skin tones. Across the United States, innocent people have been wrongfully arrested on the basis of incorrect identifications made by these systems. In some cases, those errors have had severe, life-changing consequences for the people wrongly implicated in crimes.

As a digital forensics expert, I have seen firsthand how badly facial recognition technology can err. In one case involving a felony accusation, the entire case hinged on a single facial recognition match from surveillance footage. My investigation revealed that the defendant was miles away from the crime scene during the relevant timeframe, showing that the match was a misidentification. Such cases highlight the dangers of over-reliance on these tools within the criminal justice system, especially as companies like Clearview seek to collect even more personal data.

Legal and Regulatory Challenges Facing Clearview AI

Clearview AI faces a growing number of legal challenges worldwide. Recently, the company successfully challenged a £7.5 million fine imposed by the UK’s Information Commissioner’s Office, arguing that it fell outside the jurisdiction of UK law. However, this is just one battle in a larger, ongoing regulatory struggle.

Clearview has faced significant legal penalties for privacy violations in various countries. It also recently settled a case over alleged violations of biometric privacy laws, a settlement that resulted in the company relinquishing nearly a quarter of its ownership.

Understanding the Industry Context

Clearview AI’s business model primarily targets law enforcement agencies by selling access to its facial recognition technology. The company asserts that its technology aids in solving cases ranging from homicides to financial fraud. Unlike competitors such as NEC and Idemia, which have adopted more traditional business development methods, Clearview’s aggressive approach of collecting billions of images from social media without consent has drawn significant scrutiny.

The latest revelations regarding Clearview’s intentions to acquire sensitive personal data come amidst increasing pressure for regulation and transparency within the facial recognition sector. As this powerful technology continues to make its way into law enforcement and private security, crucial questions regarding privacy, consent, and algorithmic bias remain at the forefront of public discussions.

Note: The case examples described are based on real events, but names, dates, locations, and specific details have been altered to protect client confidentiality while preserving essential legal principles and investigative techniques.

404 Media reports that Investigative Consultant, Inc. and Clearview did not respond to multiple requests for comment. I have also reached out for comment and will update this article accordingly if a response is received.