DeepMind Plans to Use AI Models to Power Physical Robots

Google DeepMind Launches Advanced AI Models for Robotics

On a recent Wednesday, Google DeepMind unveiled two new AI models designed specifically for robotics. Both run on Gemini 2.0, which Google calls its "most capable" AI to date, and both are intended to change how robots interact with their environments.

Introduction to Gemini Robotics

The two models are Gemini Robotics and Gemini Robotics-ER (Embodied Reasoning). Earlier generative AI focused mainly on producing text or images. These models instead are designed to output commands for physical actions. The goal is to let robots carry out real-world tasks that require manual dexterity and decision-making.

Partnership with Apptronik

Google also announced a partnership with Apptronik, a Texas-based developer of humanoid robots, to build the next generation of humanoid robots with Gemini 2.0. Apptronik has previously worked with organizations including Nvidia and NASA. Last month, the company raised $350 million in funding, with Google among the investors.

Demonstration of New Capabilities

In a series of demonstration videos, Google showed Apptronik robots running the new models as they plugged devices into power strips, packed lunch boxes, and moved plastic vegetables, all in response to voice commands. Google has not said when the technology will be commercially available.

Key Qualities of the New AI Models

Google emphasizes that for AI models to be effective in robotics, they must exhibit three essential qualities:

- General Adaptability: The models must adapt to new and unfamiliar situations.

- Interactivity: They must quickly understand and respond to verbal instructions or changes in their surroundings.

- Dexterity: They must be able to manipulate objects the way humans do with their hands.

Gemini Robotics-ER for Developers

Gemini Robotics-ER is aimed at engineers and developers, who can use it to build and train their own robotics models. Google is making it available to trusted testers in the robotics industry, including Agile Robots, Boston Dynamics, and Enchanted Tools.

Broader Context in Robotics

Google is not the only tech giant applying AI to robotics. In November, OpenAI invested in Physical Intelligence, a startup that applies large-scale AI algorithms to robotics. On the same day it announced the investment, OpenAI hired the former leader of Meta's Orion augmented reality glasses project to head its own consumer hardware and robotics efforts. Tesla, meanwhile, has entered the humanoid robot market with its Optimus robot. Together, these moves point to growing interest in the sector.

Google’s Vision for Robotics

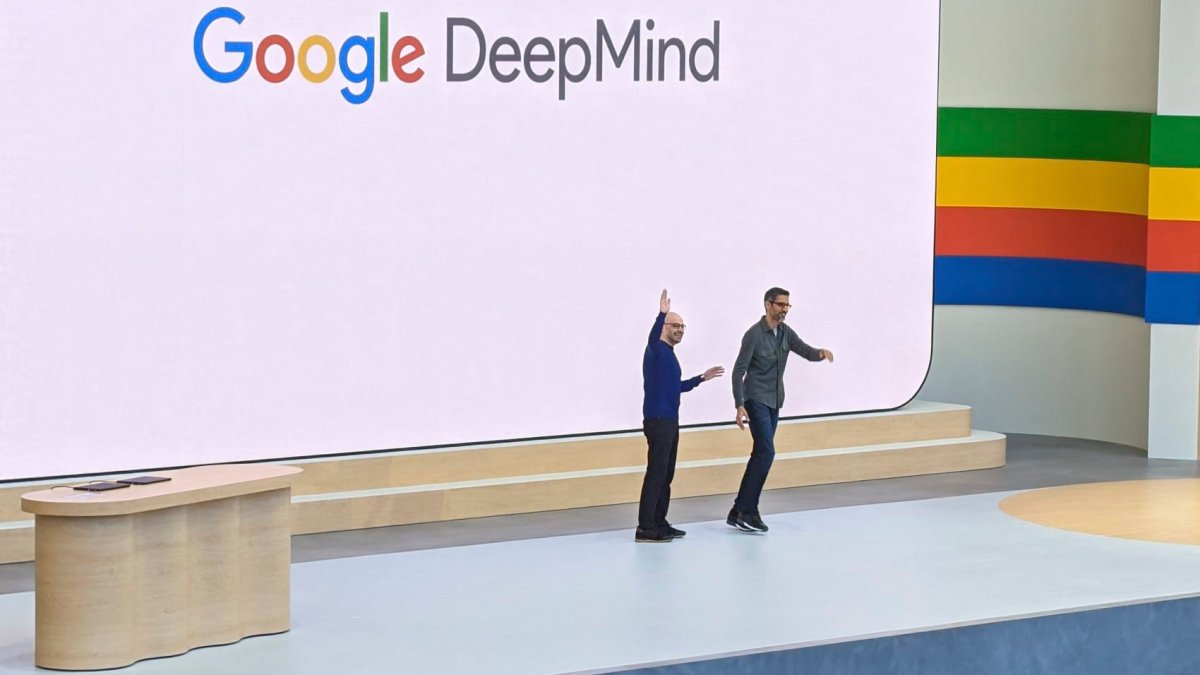

In a social media post, Google CEO Sundar Pichai said the company views robotics as an important testing ground for applying AI advances in real-world settings. Robots powered by Gemini 2.0 are designed to adapt and adjust as they move through their environments.

How these models will shape robotics, and the industries that rely on it, will become clearer as their development continues. Their introduction marks a significant step toward bringing AI into practical, everyday applications.