Did That Instagram Photo Use AI? Meta’s Ongoing Efforts to Regulate AI Manipulation in 2024

The Emergence of AI-Generated Content on Social Media

Facebook has long been a popular place for fans to follow their favorite artists and share concert dates, photos, and the occasional video. An Australian teacher named Jake used it to follow the acclaimed rock band Nick Cave and the Bad Seeds. In 2023, he came across a video in which Nick Cave appeared to promote a supposedly “foolproof” investment opportunity. Reassured by Cave’s stature as a respected artist, Jake decided to take the plunge, saying, “I respect him greatly as an artist, so I’m, of course, thinking that’s put the icing on the cake.” Unfortunately for Jake, the video was fake, and he was scammed out of AUD 130,000.

Jake’s experience exemplifies a growing concern: AI-generated content is being used to mislead people, especially when it impersonates popular celebrities. Scammers now use AI tools to create convincing fake videos and images, making it easy for unsuspecting fans to be taken in.

Meta’s Response to AI-Generated Content

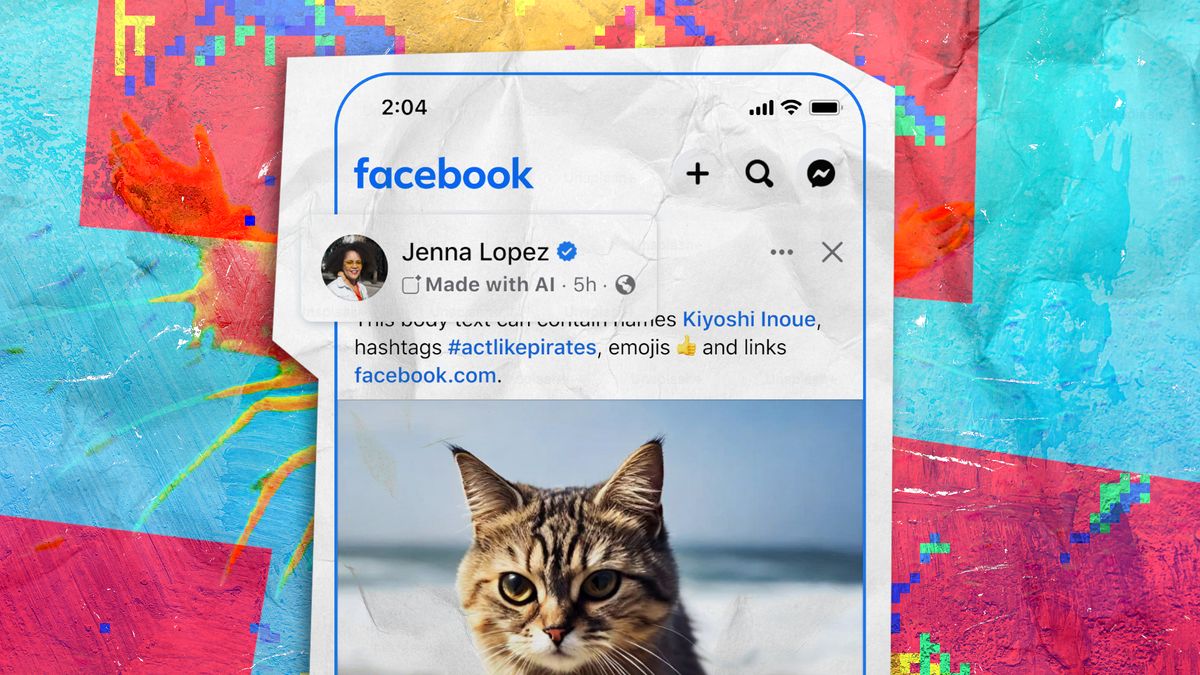

Recognizing the challenges posed by such fraudulent content, Meta announced in February 2024 a new feature for labeling AI-generated material on Facebook. The initiative came too late for Jake, but it holds promise for helping users identify potential scams in the future.

Details of the AI Labeling Feature

The feature displays a text label above multimedia content indicating whether it contains AI-generated elements. Meta adjusted the policy several times over the course of 2024:

- **April**: Meta expanded its AI labeling policy, stating it would no longer remove AI-manipulated content unless it violated community standards. The types of content that would receive AI labels were also broadened to include videos and audio.

- **May**: Meta refined its policy to specify that it would label “organic content,” which refers to user-generated content incorporating AI.

- **July**: The label was revised from “Made with AI” to “AI info.” This shift aimed to cover a broader range of AI-related content, although it made the specifics about AI usage less clear.

- **September**: Meta decided that for content merely modified with AI, rather than fully created with it, the AI label would be moved out of view into the post’s menu. Users are required to label images correctly or face penalties, particularly if they profit from their accounts.

The Effectiveness of Labeling AI-Generated Content

Labeling AI-generated content is widely seen as a way to reduce confusion and curb misinformation. Research suggests that the wording of a label matters: some terms may be more effective than others at catching users’ attention and helping them understand AI technologies. Nonetheless, the growing prevalence of deepfakes and other AI-generated media raises ongoing questions about how well these labels can mitigate the risk of misinformation.

Despite the intention behind the labeling feature, concerns remain that AI-generated content will still be used for deception, including scams. In September 2024, an investigation found that scammers had used AI-generated photos of military personnel to deceive people on Facebook, echoing Jake’s experience. This highlights Meta’s continuing struggle to combat the misuse of AI technology on its platforms.

While Meta fine-tunes its AI detection tools and labeling processes, AI-related scams remain possible, leaving users vulnerable until further measures are in place.