Gemini Update Enables Live Camera Insights from Google

Google Introduces Real-Time AI Video Features with Gemini

Google has begun rolling out real-time AI video capabilities for Gemini. The new functionality lets Gemini analyze visual input from a user’s device screen or smartphone camera and respond in context. The rollout comes nearly a year after "Project Astra," the technology behind these features, was first demonstrated at Google I/O 2024.

Key Features of Gemini’s AI Video Capabilities

Screen Reading Functionality

The first new feature is Gemini’s ability to read and interpret content displayed on a user’s device. Early user reports, including one posted on Reddit, indicate that the feature first appeared on a Xiaomi smartphone. That Reddit user shared a video showing Gemini analyzing on-screen content.

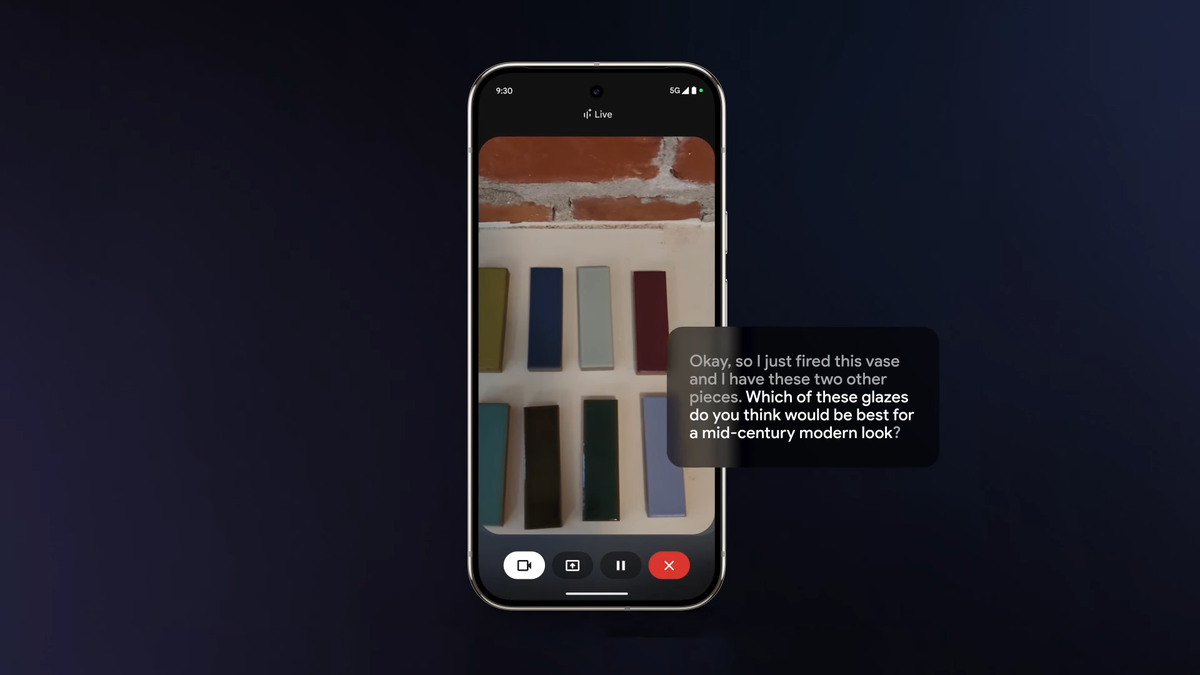

Live Video Processing

The second major feature uses the smartphone’s camera to process a live video feed. This lets Gemini interpret the user’s immediate surroundings and answer questions about what the camera sees in real time.

Recent Updates to Gemini

The real-time AI features are part of a series of recent Gemini updates. Before this rollout, Google introduced Canvas, which assists users with writing and coding tasks, along with Audio Overview, which turns documents into podcast-style audio summaries. Together, these updates strengthen Google’s position in AI assistants relative to competitors such as Apple, Samsung, and Amazon.

Progress and Future Prospects

Although the current features represent a significant leap, they might not encompass the full extent of what Project Astra can achieve, as demonstrated in earlier previews. The original presentations revealed capabilities like memory retention, where the AI could remember items it had seen through the camera and provide updates on their location or details.

Many observers believe that the rapid development of these features suggests an exciting future for AI assistants. The pace at which Gemini is expanding its capabilities leaves users eager to see what more Google has in store during the upcoming Google I/O conference.

In Summary

With the rollout of screen reading and live video processing, Gemini can now respond to what is on a user’s screen or in front of the camera. As AI development continues to accelerate, these features point toward more intuitive and responsive technology in daily life.
