Meta AI Creates Compact Language Model for Mobile Applications

MobileLLM: A New Frontier in Efficient AI Models

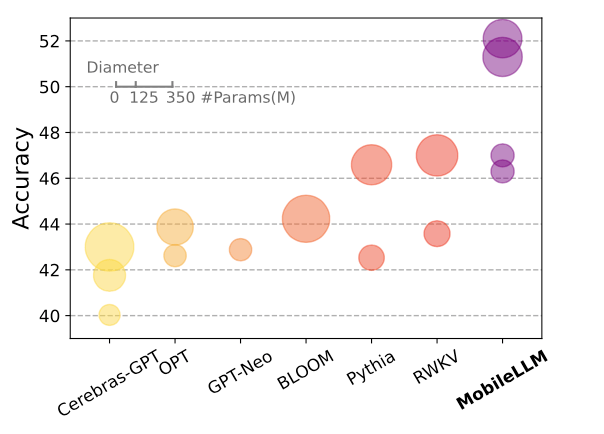

Meta AI researchers have introduced MobileLLM, an approach to building efficient language models for smartphones and other resource-constrained devices. Published on June 27, 2024, the research challenges the prevailing assumption that effective language models must be large.

Key Features of MobileLLM

The research team, which includes members of Meta Reality Labs, PyTorch, and Meta AI Research (FAIR), focused on models with fewer than 1 billion parameters. That is a small fraction of the size of leading models such as GPT-4, which is estimated to have more than a trillion parameters. A model at this scale is far easier to deploy on consumer devices.

Innovative Aspects of MobileLLM:

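- Depth Over Width: Prioritizing a deeper, thinner architecture over a wider one improves capability without increasing the parameter count.

- Embedding Sharing and Grouped-Query Attention: Reusing the input embedding weights in the output layer, and letting groups of query heads share key-value heads, makes more efficient use of a small parameter budget.

- Block-Wise Weight Sharing: Adjacent transformer blocks reuse the same weights, adding effective depth without adding parameters and with only a small latency cost.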
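The effect of the techniques listed above on model size can be illustrated with a rough parameter count. The sketch below is not taken from the MobileLLM code: the `Config` class, its field names, and every dimension in it are hypothetical values chosen for illustration, normalization weights and biases are ignored, and a gated feed-forward layer with three weight matrices is assumed.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Config:
    """Hypothetical decoder-only transformer shape (illustrative, not MobileLLM's)."""
    vocab_size: int = 32_000
    dim: int = 512            # hidden size
    n_blocks: int = 30        # transformer blocks whose weights are stored
    n_heads: int = 8          # query heads
    n_kv_heads: int = 8       # key/value heads; fewer than n_heads = grouped-query attention
    ffn_dim: int = 1536       # feed-forward inner size
    share_embeddings: bool = False  # reuse the input embedding as the output projection
    block_repeat: int = 1     # times each stored block is executed in a forward pass


def count_params(cfg: Config) -> int:
    """Approximate weight count, ignoring normalization weights and biases."""
    head_dim = cfg.dim // cfg.n_heads
    kv_dim = cfg.n_kv_heads * head_dim
    # Query and output projections are dim x dim; key and value shrink with n_kv_heads.
    attention = 2 * cfg.dim * cfg.dim + 2 * cfg.dim * kv_dim
    # Assumes a gated feed-forward layer with three weight matrices.
    feed_forward = 3 * cfg.dim * cfg.ffn_dim
    embedding = cfg.vocab_size * cfg.dim
    embedding_total = embedding if cfg.share_embeddings else 2 * embedding
    # block_repeat does not appear here: repeated blocks reuse the same weights.
    return cfg.n_blocks * (attention + feed_forward) + embedding_total


def executed_depth(cfg: Config) -> int:
    """Number of block applications in one forward pass."""
    return cfg.n_blocks * cfg.block_repeat


baseline = Config()
shared_emb = replace(baseline, share_embeddings=True)
gqa = replace(shared_emb, n_kv_heads=2)
shared_blocks = replace(gqa, block_repeat=2)

for name, cfg in [("baseline", baseline),
                  ("+ embedding sharing", shared_emb),
                  ("+ grouped-query attention", gqa),
                  ("+ block-wise weight sharing", shared_blocks)]:
    print(f"{name:28s} params={count_params(cfg):>12,d} depth={executed_depth(cfg)}")
```

In this toy configuration, embedding sharing and grouped-query attention each lower the stored parameter count, while block-wise weight sharing doubles the executed depth without adding any parameters. The parameters freed this way can be spent on additional layers, which is how the depth-over-width idea fits in.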

Together, these design choices allowed MobileLLM to outperform previous models of comparable size by 2.7% to 4.3% on standard benchmark tasks. These gains may appear modest, but they are meaningful in the highly competitive field of language model development.

Performance Insights

One notable finding was that the 350 million parameter version of MobileLLM achieved accuracy comparable to the much larger 7 billion parameter LLaMA-2 model on certain API calling tasks. This suggests that, for some specific applications, compact models can offer similar functionality while using far fewer computational resources.
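To give a sense of the resource gap between the two model sizes, the following back-of-the-envelope sketch estimates the memory needed to hold the weights alone. It assumes 16-bit weights (2 bytes per parameter), which is an assumption made here rather than a figure from the research, and it ignores activations and the key-value cache. The function name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(n_params: int, bytes_per_param: int = 2) -> float:
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bytes_per_param / 1e9


small = weight_memory_gb(350_000_000)     # 350 million parameters
large = weight_memory_gb(7_000_000_000)   # 7 billion parameters
print(f"350M model: {small:.1f} GB, 7B model: {large:.1f} GB, ratio: {large / small:.0f}x")
```

Under these assumptions the smaller model needs about 0.7 GB for its weights against about 14 GB for the larger one, a factor of 20.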

(Image credit: “MobileLLM: Optimizing Sub-billion Parameter Language Models for On-Device Use Cases”, Zechun Liu et al., Meta)

Growing Trends Towards Efficiency

The development of MobileLLM aligns with a broader trend in AI toward efficiency. As progress in very large language models shows signs of slowing, researchers are increasingly exploring more compact, specialized designs. MobileLLM stands out for its focus on on-device deployment. Despite the "LLM" in its name, it belongs to the emerging category often called Small Language Models (SLMs).

Open-Source Access and Future Potential

While MobileLLM is not yet available for public use, Meta has open-sourced the pre-training code, allowing other researchers to build on the work. As the technology matures, more advanced AI capabilities may become feasible on personal devices. However, the timeline for widespread adoption and the specific capabilities that will emerge remain uncertain.

Breaking the Size Myth

MobileLLM challenges the assumption that capable language models must be large, opening new possibilities for AI applications on personal devices. As researchers continue to refine these techniques, efficient models that emphasize accessibility and sustainability may see broader adoption.