Meta AI Unveils COCONUT: A Revolutionary Approach to Machine Reasoning Featuring Continuous Latent Thought Processes and Enhanced Planning Abilities

Understanding Large Language Models and their Limitations

Large Language Models (LLMs) rely on extensive datasets to mimic reasoning and problem-solving capabilities, but they operate primarily within a textual framework. While this makes their output easy to read, using natural language as the sole medium for reasoning can be inefficient, because natural language is optimized for communication rather than for rigorous logical processes. Research in neuroscience suggests that when people reason, they may not consistently engage the areas of the brain responsible for language. This motivates exploring alternative methods that let LLMs reason without being bound by the constraints of language.

Challenges with Language-Based Reasoning

One significant drawback of language-centered reasoning is its computational inefficiency. As LLMs work through a reasoning chain, a large portion of the tokens they generate serve fluency rather than the reasoning itself. This inefficiency becomes more pronounced as reasoning tasks grow in complexity or when multiple possible solutions need to be explored at once. Furthermore, existing models tend to commit to a single path too early, which makes it difficult for them to backtrack and consider alternatives. This rigidity limits their potential on dynamic or exploratory problems.

The Chain-of-Thought Method and its Limitations

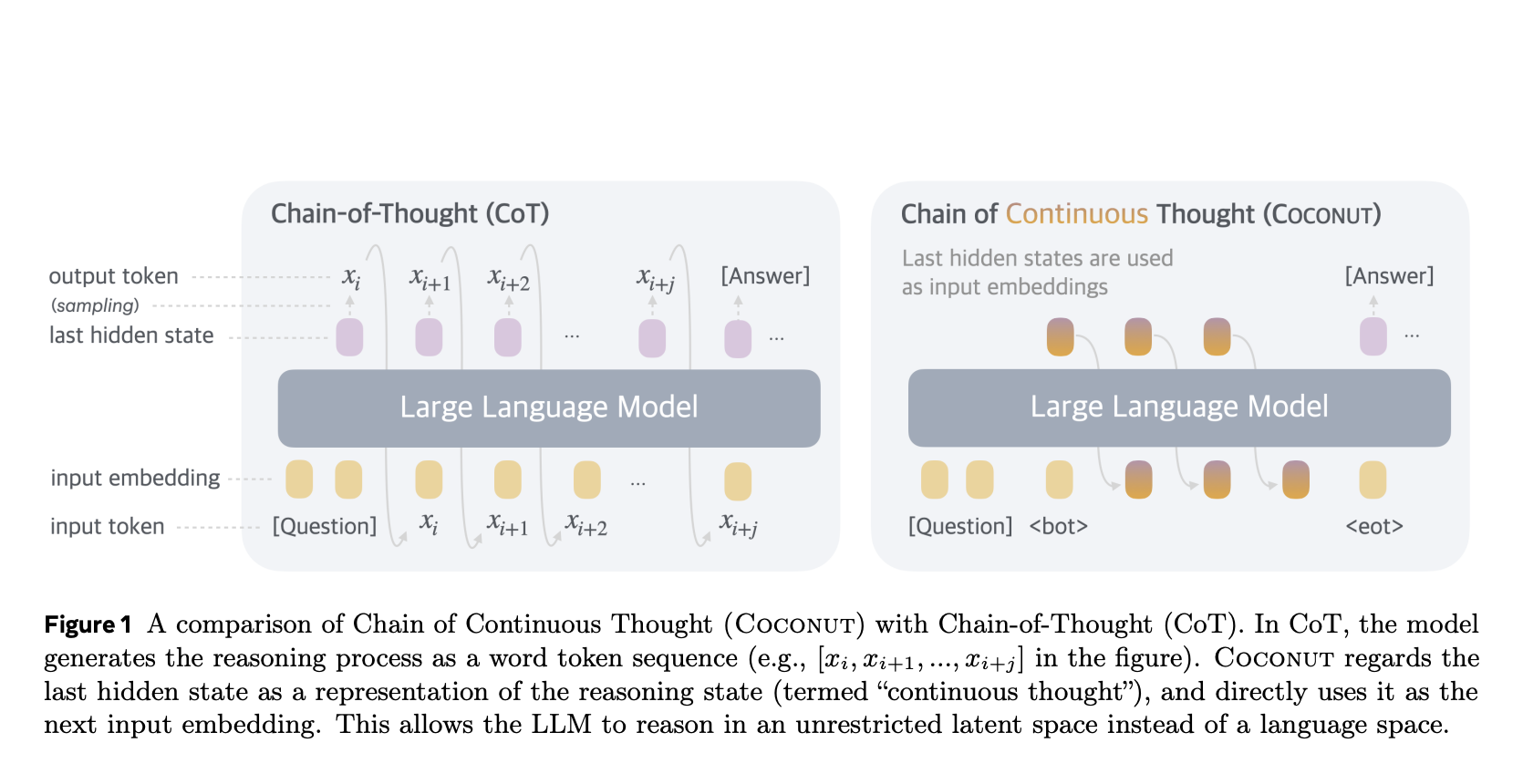

The Chain-of-Thought (CoT) approach has emerged as a strategy designed to mitigate these inefficiencies. By encouraging LLMs to write out their reasoning as step-by-step language outputs, CoT aims for greater clarity and accuracy in problem-solving. However, it is still constrained by the limitations of natural language, and it struggles particularly with tasks that demand complex planning or thorough exploration of possibilities. Recent work has attempted to incorporate latent reasoning strategies, which perform computation without producing verbal outputs. Yet across a range of tasks, these approaches still lag behind traditional language-based methods in versatility and reliability.

Introduction of COCONUT: A New Paradigm for Reasoning

Researchers from Facebook AI Research (FAIR) and UC San Diego have introduced COCONUT (Chain of Continuous Thought) to address some of the challenges inherent in prior models. COCONUT shifts the focus away from language constraints by enabling reasoning in a more flexible latent space. Instead of representing each reasoning state as word tokens, as traditional CoT does, it takes the last hidden state of the LLM as a continuous representation of the reasoning state, called a "continuous thought," and feeds it back to the model as the next input embedding rather than decoding it into a word. This lets the model reason more efficiently while keeping multiple solution routes open at once.
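The difference between decoding every step into a token and feeding the hidden state straight back can be sketched in a few lines. The code below is only an illustration under loud assumptions: a tiny randomly initialized recurrent cell stands in for the LLM, and the names `make_toy_model`, `step`, `decode_token`, and `reason` are hypothetical, not part of any COCONUT release.

```python
import math
import random

def make_toy_model(hidden_size, vocab_size, seed=0):
    """Build random weights for a stand-in model (NOT a real LLM)."""
    rng = random.Random(seed)
    def rand_matrix(rows, cols):
        return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]
    return {
        "embed": rand_matrix(vocab_size, hidden_size),    # token id -> input embedding
        "w_in": rand_matrix(hidden_size, hidden_size),    # mixes the current input
        "w_state": rand_matrix(hidden_size, hidden_size), # mixes the previous state
        "unembed": rand_matrix(vocab_size, hidden_size),  # hidden state -> token logits
    }

def matvec(matrix, vec):
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]

def step(model, state, input_embedding):
    """One forward step; returns the new 'last hidden state'."""
    a = matvec(model["w_in"], input_embedding)
    b = matvec(model["w_state"], state)
    return [math.tanh(x + y) for x, y in zip(a, b)]

def decode_token(model, hidden):
    """Greedy decoding: pick the token with the largest logit."""
    logits = matvec(model["unembed"], hidden)
    return max(range(len(logits)), key=logits.__getitem__)

def reason(model, prompt_ids, num_thoughts, latent=True):
    """Run `num_thoughts` reasoning steps, then decode one answer token.

    latent=True  : the hidden state itself is the next input (continuous thought).
    latent=False : the hidden state is decoded to a token, which is re-embedded
                   (language-style chain of thought).
    """
    state = [0.0] * len(model["w_in"])
    for tok in prompt_ids:
        state = step(model, state, model["embed"][tok])

    decoded_steps = []
    for _ in range(num_thoughts):
        if latent:
            next_input = state  # no projection onto the vocabulary
        else:
            tok = decode_token(model, state)
            decoded_steps.append(tok)
            next_input = model["embed"][tok]
        state = step(model, state, next_input)

    return decode_token(model, state), decoded_steps

model = make_toy_model(hidden_size=4, vocab_size=6, seed=1)
answer, steps = reason(model, [0, 3, 2], num_thoughts=3, latent=True)
print(answer, steps)  # in latent mode `steps` stays empty: nothing was decoded
```

In latent mode no intermediate token is ever chosen, so the information in the hidden state is not collapsed to a single word between steps. That is the property the article attributes to continuous thoughts.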

Training Methodology of COCONUT

COCONUT is trained with a multi-stage process designed to refine its latent reasoning capabilities. The model alternates between a language mode and a latent mode, and each successive training stage replaces more of the language reasoning steps with continuous thoughts, until in the later stages the model solves problems using continuous thoughts alone. The latent reasoning that results resembles a breadth-first search: the model can keep numerous reasoning paths under consideration at the same time before homing in on the best solution. Such flexibility is crucial for complex scenarios that require careful planning and decision-making.
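The staged shift from language steps to continuous thoughts can be sketched as a function that builds one training sequence per stage. This is a minimal sketch, not the authors' data pipeline: the function name `build_stage_example`, the `<bot>`/`<eot>` markers, the `<thought>` placeholder, and the `thoughts_per_step` parameter are all assumptions made for illustration.

```python
def build_stage_example(question, steps, answer, stage, thoughts_per_step=1):
    """Return the training sequence for a given curriculum stage.

    stage 0            : ordinary chain of thought, all steps in language.
    stage k (k >= 1)   : the first k language steps are replaced by
                         k * thoughts_per_step latent slots.
    stage >= len(steps): no language steps remain, only latent slots.

    "<thought>" marks a position where the model would receive its own
    previous hidden state instead of a token embedding (hypothetical marker).
    """
    if stage <= 0:
        return [question, *steps, answer]
    k = min(stage, len(steps))
    latent_slots = ["<thought>"] * (k * thoughts_per_step)
    return [question, "<bot>", *latent_slots, "<eot>", *steps[k:], answer]


steps = ["2 + 3 = 5", "5 * 4 = 20"]
for stage in range(3):
    print(stage, build_stage_example("Q", steps, "20", stage, thoughts_per_step=2))
# 0 ['Q', '2 + 3 = 5', '5 * 4 = 20', '20']
# 1 ['Q', '<bot>', '<thought>', '<thought>', '<eot>', '5 * 4 = 20', '20']
# 2 ['Q', '<bot>', '<thought>', '<thought>', '<thought>', '<thought>', '<eot>', '20']
```

Removing language steps from the front of the chain first means the remaining language steps still supervise the model while it learns to carry the earlier reasoning in latent form.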

Performance and Validation of COCONUT

COCONUT has been validated using three distinct datasets:

- GSM8K – A benchmark for mathematics reasoning.

- ProntoQA – A dataset focused on logical reasoning.

- ProsQA – A dataset that requires complex planning over graph structures.

Reported results indicate that COCONUT can outperform traditional CoT on logical reasoning while using fewer tokens. For instance, it achieved 99.9% accuracy on the ProntoQA dataset, compared to CoT’s 98.8%, and generated fewer reasoning tokens during inference. On the ProsQA dataset, COCONUT clearly excelled in tasks that required detailed planning, supporting the claim that it can reduce resource usage while maintaining high performance.
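The planning advantage is usually explained by analogy with breadth-first search, so a toy graph problem makes the contrast concrete. The sketch below is only an analogy: the model holds alternatives implicitly in continuous vectors, not in an explicit queue. The graph and the function names `greedy_path` and `bfs_path` are made up for illustration.

```python
from collections import deque

def greedy_path(graph, start, goal):
    """Commit to the first available edge at every node (early commitment)."""
    path, node, seen = [start], start, {start}
    while node != goal:
        options = [n for n in graph.get(node, []) if n not in seen]
        if not options:
            return None  # dead end, and no mechanism to backtrack
        node = options[0]
        seen.add(node)
        path.append(node)
    return path

def bfs_path(graph, start, goal):
    """Keep every partial path on a frontier and expand them level by level."""
    frontier = deque([[start]])
    visited = {start}
    while frontier:
        path = frontier.popleft()
        node = path[-1]
        if node == goal:
            return path
        for nxt in graph.get(node, []):
            if nxt not in visited:
                visited.add(nxt)
                frontier.append(path + [nxt])
    return None

# Hypothetical graph: the first branch from "A" leads to a dead end.
graph = {"A": ["B", "C"], "B": ["D"], "C": ["E"], "D": [], "E": ["G"]}

print(greedy_path(graph, "A", "G"))  # None
print(bfs_path(graph, "A", "G"))     # ['A', 'C', 'E', 'G']
```

The greedy walker fails because it commits to `B` before it can know that branch is a dead end, which mirrors the premature commitment attributed to token-by-token reasoning.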

Key Features of COCONUT

The innovative aspects of COCONUT can be summarized as follows:

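- High Accuracy: COCONUT reached 99.9% accuracy in logical reasoning (ProntoQA). In mathematical reasoning (GSM8K), the paper reports 34.1% for COCONUT; the 42.9% figure is the CoT baseline's score, which COCONUT trails while generating far fewer tokens.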

- Efficient Computation: The model produced fewer reasoning tokens during evaluations, making it computationally efficient.

- Flexibility in Reasoning: It mimics the breadth-first search technique to explore various potential solutions, avoiding premature commitments.

- Robust Training Process: Its gradual training method allows COCONUT to tackle progressively challenging problems while sustaining notable accuracy.

- Diverse Capability: COCONUT excels in a variety of reasoning tasks, from solving math problems to logical reasoning involving graph structures.

With these enhancements, COCONUT sets a new standard for machine reasoning, potentially transforming how AI systems handle complex problems.