Meta’s AI Leader: DeepSeek Demonstrates AI Advancements Go Beyond Hardware

DeepSeek Revolutionizes AI with Unique Approach

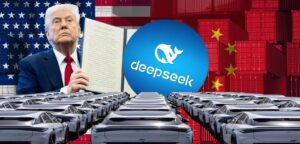

This week, Chinese AI startup DeepSeek drew wide attention by releasing a series of AI models trained on hardware considerably less powerful than what Western AI companies such as OpenAI typically use. The news rattled investors and triggered a sell-off in technology stocks, driven by concerns that companies such as Nvidia may be overvalued.

LeCun’s Insights on the Sell-Off

Yann LeCun, Meta's chief AI scientist and a recipient of the Turing Award, called the market reaction a "major misunderstanding." He stressed that DeepSeek's success comes from innovative methods for improving training efficiency, not from access to more advanced hardware. In a detailed LinkedIn post, LeCun pointed out that DeepSeek built on open research and open-source tools, such as Meta's PyTorch and Llama, to achieve its results.

“To those who interpret DeepSeek’s performance as a sign that China is outpacing the U.S. in AI, I suggest you look again. The reality is that open-source models are now outshining proprietary ones,” LeCun stated.

The Role of Open-Source in AI

LeCun is a staunch advocate of open-source AI and believes that progress in artificial intelligence will come from better model architectures rather than from heavy investment in sophisticated hardware alone. He referred to his own JEPA (Joint Embedding Predictive Architecture) concept for self-supervised learning and noted how DeepSeek built on existing published work to develop its own innovations.

“DeepSeek leveraged previously published open-source work, contributing to advancements that everyone can benefit from. This illustrates the immense potential of open research,” he remarked.

Speaking at the World Economic Forum in Davos, LeCun argued that open-source AI is essential for building diverse systems that can understand and serve people across many languages and cultures.

Market Reactions and Industry Dynamics

The sharp drop in technology stocks appears to have been driven largely by reports that DeepSeek's open-source DeepSeek-V3 model was trained for only about $6 million. That figure contrasts sharply with the billions that technology giants such as Microsoft, OpenAI, Oracle, xAI, and Google are spending on high-end AI infrastructure.

Investors grew concerned that a Chinese startup operating on a fraction of these companies' budgets could outperform them in AI capabilities. In a follow-up post on Threads, LeCun reiterated that the stock drop stemmed from a fundamental misunderstanding of what these AI investments actually pay for.

Clarifying the Costs of AI Infrastructure

LeCun explained that most of the billions invested by major companies go toward infrastructure for inference rather than training. Serving AI systems to billions of users requires enormous computing resources, and inference costs will keep rising as these systems gain capabilities such as video understanding and reasoning.

“The critical question is whether users will be willing to pay enough to make these investments worthwhile, either directly or indirectly,” he concluded.

Shifts in the AI Landscape

The developments surrounding DeepSeek have sparked debate about where AI technology and its funding are headed. As open-source models close the gap with proprietary ones, the AI landscape is shifting, and discussions about funding, infrastructure, and open access to research are likely to evolve as companies like DeepSeek continue to challenge established norms in the technology sector.